Yes, basically. But it is more complex than that, and there is an advantage to having more pixels than the scene/lens can resolve. A simple analogy...

In theory it would only require 64 pixels to record the 64 squares on a chess board. But for that to happen the squares projected by the lens would have to fall exactly on the sensor pixels, one square per pixel, and that is extremely unlikely to happen.
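To put rough numbers on the chessboard analogy, here is a minimal sketch (my own toy model, not anything measured): one row of the board is recorded by exactly one pixel per square, and the pixel grid is shifted by a fraction of a square. The names `sample_row` and `contrast` are made up for illustration, and each pixel is assumed to simply average the light falling on it.

```python
import numpy as np

def sample_row(offset, squares=8, fine=100):
    """Record one row of alternating dark/light squares with `squares` pixels.

    `offset` is how far the pixel grid is shifted, in square widths (0 to 1).
    Each pixel averages whatever light falls on it (an assumed, idealized pixel).
    """
    # One extra square so the shifted window still has data to read.
    row = np.repeat(np.arange(squares + 1) % 2, fine).astype(float)
    start = int(round(offset * fine))
    window = row[start:start + squares * fine]
    # Group the fine samples into pixels and average each group.
    return window.reshape(squares, fine).mean(axis=1)

def contrast(pixels):
    """Difference between the brightest and darkest recorded pixel."""
    return float(pixels.max() - pixels.min())

for offset in (0.0, 0.25, 0.5):
    print(offset, contrast(sample_row(offset)))
```

With perfect alignment the row keeps full contrast; with a half-square shift every pixel straddles one dark and one light square and the whole row records as uniform gray.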

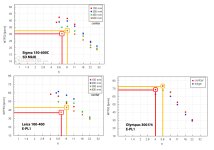

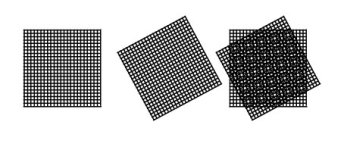

And if the two patterns (projected detail and pixel grid) are (nearly) the same size, misalignment results in aliasing (moiré), as shown in this drawing. Lower resolution sensors are equipped with an AA filter to help prevent this (by slightly blurring the image)... whereas high resolution sensors make it very unlikely that a scene/lens will resolve down to the pixel size, and can therefore omit the AA filter. This is what is known as oversampling... more samples (pixels) for more accurate results.

And increased oversampling is why a higher resolution sensor almost always records somewhat better resolution: it picks up those scene details that were previously just out of alignment. But the increase is really only significant if the original sampling (pixel pitch/sensor resolution) was inadequate or barely adequate... the law of diminishing returns.
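The aliasing and oversampling points can be sketched in a few lines. This is a simplified illustration, assuming pixels that point-sample a cosine pattern (real pixels integrate over their area); the function name `sample` and the 0.9 cycles/pixel figure are just picked for the example.

```python
import numpy as np

def sample(cycles_per_pixel, n_pixels, pixels_per_unit=1):
    """Point-sample a cosine pattern.

    `cycles_per_pixel` is measured against the ORIGINAL pixel pitch;
    `pixels_per_unit=2` models a sensor with twice as many pixels per mm.
    """
    x = np.arange(n_pixels * pixels_per_unit) / pixels_per_unit
    return np.cos(2 * np.pi * cycles_per_pixel * x)

# Detail finer than the pixels can handle (0.9 cycles/pixel, above the
# 0.5 cycles/pixel Nyquist limit) versus a much coarser pattern.
fine_detail = sample(0.9, 20)
coarse_pattern = sample(0.1, 20)
aliased = bool(np.allclose(fine_detail, coarse_pattern))

# Same two patterns on a sensor with double the linear pixel density.
dense_fine = sample(0.9, 20, pixels_per_unit=2)
dense_coarse = sample(0.1, 20, pixels_per_unit=2)
resolved = not bool(np.allclose(dense_fine, dense_coarse))

print("aliased on coarse sensor:", aliased)
print("told apart on dense sensor:", resolved)
```

On the coarse sensor the fine detail produces exactly the same samples as the coarse pattern, which is the false pattern you see as moiré. On the denser sensor the same detail sits below the Nyquist limit and the two are recorded differently.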

View attachment 377739

Similarly, if the squares of the chessboard were in color, then in order to get the most accurate color information you would want each square to be sampled by at least one RGBG pixel quad. If instead a colored square (say magenta) was only sampled by a single pixel (R, G, or B), then its actual color, and luminance, would have to be guessed based on surrounding information/pixels.
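A toy version of the magenta square, as a sketch only: each photosite is assumed to record just the channel of its own color filter, and `record` is a made-up helper, not any real demosaicing routine.

```python
# Magenta is full red plus full blue, with no green.
magenta = {"R": 1.0, "G": 0.0, "B": 1.0}

# One Bayer quad: one red, two green, one blue photosite.
BAYER_QUAD = ("R", "G", "G", "B")

def record(color, sites):
    """Return only the channels actually measured by the given photosites."""
    measured = {}
    for channel in sites:
        measured.setdefault(channel, []).append(color[channel])
    # Average repeated channels (the two greens in a quad).
    return {ch: sum(vals) / len(vals) for ch, vals in measured.items()}

quad = record(magenta, BAYER_QUAD)   # all three channels measured
single = record(magenta, ("G",))     # square lands on one green pixel only
missing = set("RGB") - set(single)   # channels that must be interpolated

print(quad, single, missing)
```

The single green photosite sees no light at all from the magenta square, so both its color and its brightness have to come from neighboring pixels.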

So the question is "full capability of utilizing the D850 sensor in what capacity?" But just in terms of "matching dots" and recorded resolution, there are few lenses that can; and the ones that can, can only do it at a very wide aperture setting (stopping down increases diffraction).
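For a feel of why diffraction matters at this pixel size, here is a rough sketch using the standard ideal-lens formulas (Airy disk diameter = 2.44 x wavelength x f-number; diffraction cutoff = 1 / (wavelength x f-number)). The sensor width and pixel count are nominal D850 figures I am assuming, and 550 nm green light is also an assumption; real lenses with aberrations do worse.

```python
WAVELENGTH_MM = 0.00055   # assumed: green light, 550 nm
SENSOR_WIDTH_MM = 35.9    # assumed nominal D850 sensor width
PIXELS_ACROSS = 8256      # assumed nominal D850 horizontal pixel count

def pixel_pitch_um(width_mm=SENSOR_WIDTH_MM, pixels=PIXELS_ACROSS):
    """Center-to-center pixel spacing in micrometers."""
    return width_mm / pixels * 1000.0

def airy_disk_um(f_number, wavelength_mm=WAVELENGTH_MM):
    """Diameter of the Airy disk (to the first dark ring) in micrometers."""
    return 2.44 * wavelength_mm * f_number * 1000.0

def sensor_nyquist(width_mm=SENSOR_WIDTH_MM, pixels=PIXELS_ACROSS):
    """Finest pattern the pixel grid can sample, in cycles per mm."""
    return pixels / (2.0 * width_mm)

def diffraction_cutoff(f_number, wavelength_mm=WAVELENGTH_MM):
    """Frequency where an ideal lens drops to zero contrast, in cycles per mm."""
    return 1.0 / (wavelength_mm * f_number)

print("pixel pitch (um):", round(pixel_pitch_um(), 2))
print("sensor Nyquist (cycles/mm):", round(sensor_nyquist(), 1))
for n in (2.8, 5.6, 8, 11, 16):
    print(f"f/{n}: Airy disk {airy_disk_um(n):.1f} um, "
          f"cutoff {diffraction_cutoff(n):.0f} cycles/mm")
```

Keep in mind the cutoff is where contrast reaches zero even for a perfect lens, so usable detail at the pixel level runs out at noticeably wider apertures than the crossover these numbers suggest.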

Interestingly, in the circle of "cropping the scene" a larger sensor of the same resolution (MP) is less demanding because it has larger pixels. But at the same time it is equally more demanding because it requires a lens of greater magnification to record the same composition... and that causes increased diffraction/CA/etc. After a certain point, making a longer lens that is equally sharp is extremely difficult to do. As I said before... no version/form/stage of cropping has a definitive advantage.

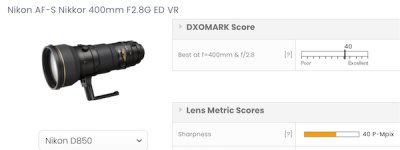

I like DXO's reports... they are easy because they allow you to compare different lenses on the same body, or different bodies with the same lens. And that is important if you want to directly compare results without a lot of additional information/math. They also convert a measured MTF (lines per mm recorded) into the MP required to record those lines (matching dots; "perceptual MP"). And while they measure recorded resolution a bit differently than others (i.e. it's not a simple MTF 50), it is empirical and consistent.
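The "matching dots" idea can be sketched as simple arithmetic. To be clear, this is NOT DXO's actual method (which, as noted, is not a simple MTF 50 conversion); it is just the naive version, assuming the measurement is in line pairs per mm and that each line pair needs two pixels. The function name is made up.

```python
def megapixels_to_match(lp_per_mm, width_mm=36.0, height_mm=24.0):
    """Pixels needed to give every recorded line pair two pixels, in MP.

    Assumes a uniform resolution across the frame and a 36 x 24 mm sensor
    by default; both are simplifications for illustration.
    """
    pixels_wide = 2 * lp_per_mm * width_mm
    pixels_high = 2 * lp_per_mm * height_mm
    return pixels_wide * pixels_high / 1e6

for lp in (30, 50, 70, 100):
    print(lp, "lp/mm ->", round(megapixels_to_match(lp), 1), "MP")
```

Note that the MP figure grows with the square of the linear resolution, so doubling the lp/mm a lens delivers quadruples the MP needed to match it.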

View attachment 377744

DXO almost always says a lens is "best at" its max aperture because diffraction is such a big factor with modern sensors. But in strictly MTF terms (sharpness at a given contrast level) it's usually at least a little better stopped down some.

But it also helps to know that a human cannot even see more than ~14MP in an image when viewed normally (as a whole image)... just as you cannot see the dots that make up the image on your screen. And when an image looks obviously lacking in IQ, it probably has less than ~6MP of actual resolution recorded. Hell, most images are posted/viewed at less than 4MP resolution (1024-2048 long edge). That's why DXO sharpness graphs look the way they do (color coding, stops at 12MP).

View attachment 377743
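As a quick check on the "less than 4MP" figure for typical posted sizes, here is the arithmetic, assuming a 3:2 aspect ratio (other ratios shift the numbers a little). The helper name is made up.

```python
def megapixels_from_long_edge(long_edge_px, aspect=(3, 2)):
    """Total megapixels for an image with the given long edge and aspect ratio."""
    long_side, short_side = aspect
    short_edge_px = long_edge_px * short_side / long_side
    return long_edge_px * short_edge_px / 1e6

for edge in (1024, 1600, 2048):
    print(edge, "px long edge ->", round(megapixels_from_long_edge(edge), 2), "MP")
```

Even the largest of those sizes comes out well under 4MP.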

In other words, in a lot of (most) cases chasing these technical details/advances/differences makes very little difference relative to the actual need and benefit.

A simple example is that it is often more beneficial to stop down in order to record a greater number of larger details more acceptably in focus (increased DOF), even though that actually means a lower total resolution is recorded (loss of smaller details due to increased diffraction).